The Future is for the Experimentalist.

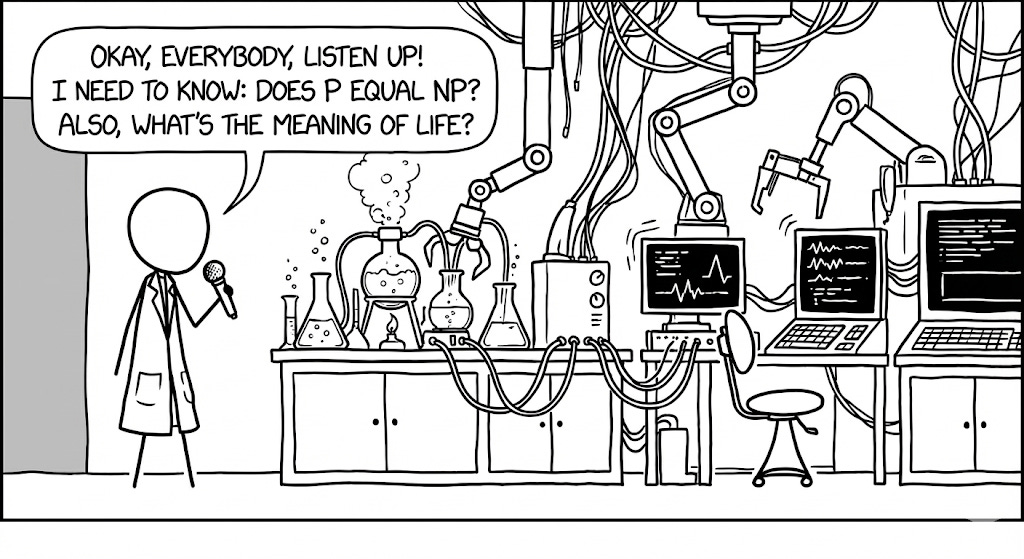

AI isn’t your scientist; it’s your lab. A lab without scientists is a glorified storage closet.

My favorite way to think about People Analytics is as a research and development team focused on understanding a specific company. Like any high-performing R&D team, a high-performing People Analytics team is highly interdisciplinary and takes advantage of a wide range of educational backgrounds. What makes such a group a coherent team isn’t a shared degree, skill set, or tech stack; it is a shared obsession with figuring things out.

The unfortunate legacy of the past decade’s obsession with AI (Machine Learning and LLMs) is that People Analytics teams have been rewarded for ‘black box’ approaches. We have created a generation of practitioners who are better at getting a result that works than at explaining why it works. The value of this flavor of People Analytics is actively eroding as LLMs become just as proficient at throwing spaghetti at the wall as humans.

As we move deeper into the AI era, the future of People Analytics will not be driven by Data Scientists, IO Psychologists, or Data Engineers who have mastered the syntax of their tools, but by experimentalists who master the art of the experiment. We need more scientists.

The Pursuit of Knowing

Part of the skill gap we are about to experience is the result of an age-old problem. Screenwriters and novelists have created a widespread misconception that scientists are people who know lots of things. This ubiquitous misconception has led to common oxymorons like “exact science” and even to the misuse of the word “scientist” altogether. Very few data scientists seem to know anything about how to do science.

To set the record straight, scientists are not people who know lots of things; scientists are people who ask lots of things. They care more deeply about the quality of an answer than about having the answer.

This distinction between knowing and questioning is a lesson many of us learn the hard way. In Zen and the Art of Motorcycle Maintenance, Robert Pirsig indirectly describes a great scientist when he writes about the concept of “Quality”:

“Care and Quality are internal and external aspects of the same thing. A person who sees Quality and feels it as he works is a person who cares. A person who cares about what he sees and does is a person who’s bound to have some characteristic of Quality.”

In his own way, Richard Feynman described this same person when he recounted the labor required to truly “know” something:

“I don’t know what’s the matter with people: they don’t learn by understanding; they learn by some other way—by rote or something. Their knowledge is so fragile! ... You have to work very hard to know if you know. You have to check it, and you have to think about it, and you have to see if it’s really true.”

Learning how to know something is a painful process of trial and error.

The Eigenvector and the Cardiologist

Years ago, before “Data Science” was a phrase anyone would recognize, I was a young researcher in the UC Davis Applied Science department, an applied physics program that had been founded by Edward Teller to build future generations of great experimentalists. The department was small, but the faculty were brilliant and highly interdisciplinary. The lab I worked in was located on the medical campus, where I was surrounded by MDs, biologists, chemists, and engineers who all knew much more than I did about pretty much everything. I knew some math, and I knew how to run experiments.

In addition to my work in nonlinear optical microscopy, I would take on small side projects with the medical school faculty. At one point I was collaborating with a cardiologist to see if we could distinguish diseased heart tissue from healthy tissue at the cellular level using light scattering. He would provide the samples, while I would take the measurements on a microscope that our lab had built and provide the statistical analysis.

At the time, Principal Component Analysis (PCA) was the ‘it’ technique. It seemed like magic: you didn’t really have to know anything about your data; you just put it in and, presto, you could easily classify groups with subtle differences. When I figured out, despite all the jargon, that it was just linear algebra, I got very excited about using it for this problem with the heart cells.
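For readers who want to see what “just linear algebra” means here, the following is a minimal sketch, not the program from this story. It mean-centers the data, builds the covariance matrix, and finds the covariance matrix’s first eigenvector by power iteration. Power iteration is a choice made here for brevity; a real analysis would more likely call a library eigendecomposition such as NumPy’s `numpy.linalg.eigh`. The function names and the synthetic two-dimensional measurements are hypothetical, made up for illustration.

```python
import math
import random

def mean_center(rows):
    """Subtract each column's mean so the data is centered at the origin."""
    n = len(rows)
    means = [sum(col) / n for col in zip(*rows)]
    return [[x - m for x, m in zip(row, means)] for row in rows]

def covariance(centered):
    """Sample covariance matrix (d x d) of already mean-centered rows."""
    n, d = len(centered), len(centered[0])
    return [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
            for i in range(d)]

def first_eigenvector(matrix, iterations=200):
    """Power iteration: multiply a vector by the matrix and renormalize until it
    settles on the eigenvector with the largest eigenvalue. Assumes that
    eigenvalue is strictly the largest and the start vector is not orthogonal
    to its eigenvector."""
    d = len(matrix)
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v

def pc_scores(centered, direction):
    """Project each row onto a direction: its coordinate along that component."""
    return [sum(x * c for x, c in zip(row, direction)) for row in centered]

# Hypothetical 2-D measurements: wide spread along the (1, 1) diagonal,
# narrow spread perpendicular to it.
random.seed(0)
data = []
for _ in range(200):
    t = random.gauss(0, 3)    # large variation along the diagonal
    e = random.gauss(0, 0.3)  # small variation across it
    data.append([t + e, t - e])

centered = mean_center(data)
pc1 = first_eigenvector(covariance(centered))
scores = pc_scores(centered, pc1)
print("PC1 direction:", [round(c, 3) for c in pc1])
```

The printed direction should lie close to the (1, 1) diagonal, normalized to unit length, because that is where this synthetic data varies most. The output is a direction in measurement space and a score for each sample; the arithmetic alone says nothing about what that direction means for the cells being measured.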

I wrote a simple program to analyze my data, and when I plotted the first two principal components, I felt like a genius. The diseased cells and healthy cells showed up clear as day. I enthusiastically put together my results and scheduled time with the cardiologist to share the discovery. The next day I proudly sat in his office as I watched his initial excitement fade into confusion.

“This is wonderful,” he said. “But what does it mean?”

I launched into a technical explanation: “Well, you see, this axis is the first eigenvector of the covariance matrix, so it rotates the n-dimensional data so the direction with the most variance lines up with the x-axis…”

I trailed off as I saw his blank stare.

“Right,” he said. “That’s what you did. But what does it mean? Can you tell me anything about how the cells are different?”

I couldn’t. I had no idea.

In the end, that PCA analysis was just the first step in a series of experiments that produced a much more useful result. The final result was actually quite simple. Getting to a simple answer takes a tremendous amount of work.

This was one of my first hard lessons in the difference between getting an answer and knowing something. As the professional world grapples with the implications of LLMs we will only see this gap grow.

AI is the Lab, Not the Scientist

In the AI era, access to facts is a commodity. Hiring people who know things is less important than hiring people who know how to figure things out. The value of experimentalists is rapidly escalating.

The LLM is not a scientist; it is a laboratory where your best experimentalists will thrive. It’s a room full of amazing tools, a playground for a curious mind. Mistaking the lab for the scientist will leave you vulnerable to churning out enterprise-grade AI “slop”.

To take advantage of this amazing laboratory, you will need amazing experimentalists, and there won’t be any single degree that serves as a reliable proxy for this talent. While IO Psychologists and Data Scientists will continue to bring vital tools, you will need an equal mix of physicists, chemists, biologists, economists, and others who have mastered these experimentalist skills in unique contexts:

First Principles Thinking: A good experimentalist doesn’t try to answer complex questions; they know it’s much easier to answer simple ones. They know how to break problems down into their fundamental parts and identify likely independent variables. If you ask them about turnover, they don’t immediately suggest a “Logistic Regression.” They start asking about the fundamental “forces” acting on an employee: incentive, competition, and aspiration.

Experimental Design: Experimentalists know how to creatively test an idea using the simplest possible approach. They test the idea, not just the available data. When the problem is messy, this often requires some highly creative thinking to identify the linchpin assumption upon which a conclusion is built and to create a scenario that challenges or supports that assumption. They know that data in support of an assumption isn’t proof; it’s evidence.

Creative Instrumentation: Rather than relying solely on the tools and data provided, a good experimentalist builds the tools to get the data they need. If the “sentiment data” isn’t good enough, they find a way to measure the network or the friction in a process, tinkering with different approaches until they get what they need. They aren’t afraid to build whatever the question requires, and they aren’t afraid to repurpose the tools they already have in new and creative ways. What’s really the difference between a survey, an assessment, and a performance review, anyway?

Mathematical Reasoning: Good experimentalists bring a deep intuition for the math behind their measurements. They know that statistical significance is not the same thing as a significant result, and they apply that understanding in their work. They can often explain the math on the spot with real-world examples, because they’ve tried, failed, and tried again to implement their recommendations.
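To make the gap between statistical and practical significance concrete, here is a minimal sketch with hypothetical numbers: two groups of 200,000 employees whose average engagement scores, on an assumed 1 to 5 scale with a standard deviation of 1, differ by 0.02 points. It uses a two-sample z-test, assuming equal group sizes and a known common standard deviation, and reports Cohen’s d as the effect size. The function names and all figures are illustrative, not drawn from any real dataset.

```python
import math

def two_sample_z_test(mean_a, mean_b, sd, n_per_group):
    """Two-sided z-test for a difference in means, assuming equal group sizes
    and a known standard deviation shared by both groups."""
    standard_error = sd * math.sqrt(2 / n_per_group)
    z = (mean_a - mean_b) / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    return z, p_value

def cohens_d(mean_a, mean_b, sd):
    """Effect size: the difference in means expressed in standard deviations."""
    return (mean_a - mean_b) / sd

# Hypothetical engagement scores for two very large groups of employees.
z, p = two_sample_z_test(3.52, 3.50, sd=1.0, n_per_group=200_000)
d = cohens_d(3.52, 3.50, sd=1.0)
print(f"z = {z:.2f}, p = {p:.1e}, Cohen's d = {d:.2f}")
```

With samples this large, the p-value lands far below any conventional threshold, yet the difference is two hundredths of a standard deviation, likely too small to justify any intervention. The test answers “is there a difference?”, not “does the difference matter?”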

People Research & Development

If we embrace a culture of R&D, AI will become a tremendous springboard for People Analytics: a high-powered, high-speed environment where our interdisciplinary teams can flourish.

In the end, the cardiologist’s question—“But what does it mean?”—will be the mantra that keeps us from descending into a collective AI-induced hallucination. The most important priority we can hold is the quality of our answers.

The People Analytics teams that become a strategic advantage for their companies will be willing to do the hard labor of checking, thinking, and verifying until they know.