The Technology Almost Always Fails Last

Why Organizations Can't See What's Killing Them: And Who Can

In the 2014 comedy A Million Ways to Die in the West, Seth MacFarlane plays a cowardly sheep farmer in 1880s Arizona who can’t stop cataloging all the ways the frontier is trying to kill everyone. He’s worried about outlaws, bar fights, rattlesnakes, dysentery, poorly aimed gunfire, and the lack of indoor plumbing. Everything is dangerous, nobody is paying attention, and the people who survive are the ones who stay scared.

Andy Grove, the former Intel CEO, put it more seriously: “Success breeds complacency. Complacency breeds failure. Only the paranoid survive.”¹ Bill Marriott built the world’s largest hotel company on the creed that serves as the title of his biography: “Success is never final; as soon as a person thinks it is, that person fails.”² Great teams never let their guard down, because innovation is the wild west, and the complexity you’ve accumulated, the shortcuts you’ve normalized, and the assumptions you’ve canonized are out to get you.

What follows are lessons extracted from 17 systemic failures across modern industry. The cases span aerospace, nuclear energy, oil and gas, aviation manufacturing, construction, medical devices, cybersecurity, financial systems, government IT, and consumer infrastructure. They are not the only such failures or even necessarily the worst. They are chosen because they are well-documented, span different industries and decades, and collectively reveal patterns that no single case could. Some killed hundreds of people. Some killed no one but destroyed billions of dollars in value. Every single one was preventable. Every single one had warning signs that were ignored.

These disasters could be viewed as a catalog of independent pathologies: poor communication here, inadequate testing there, a bad incentive somewhere else. The focus of this article is the recurring patterns across all 17 cases that suggest a common structural property of organizations themselves.

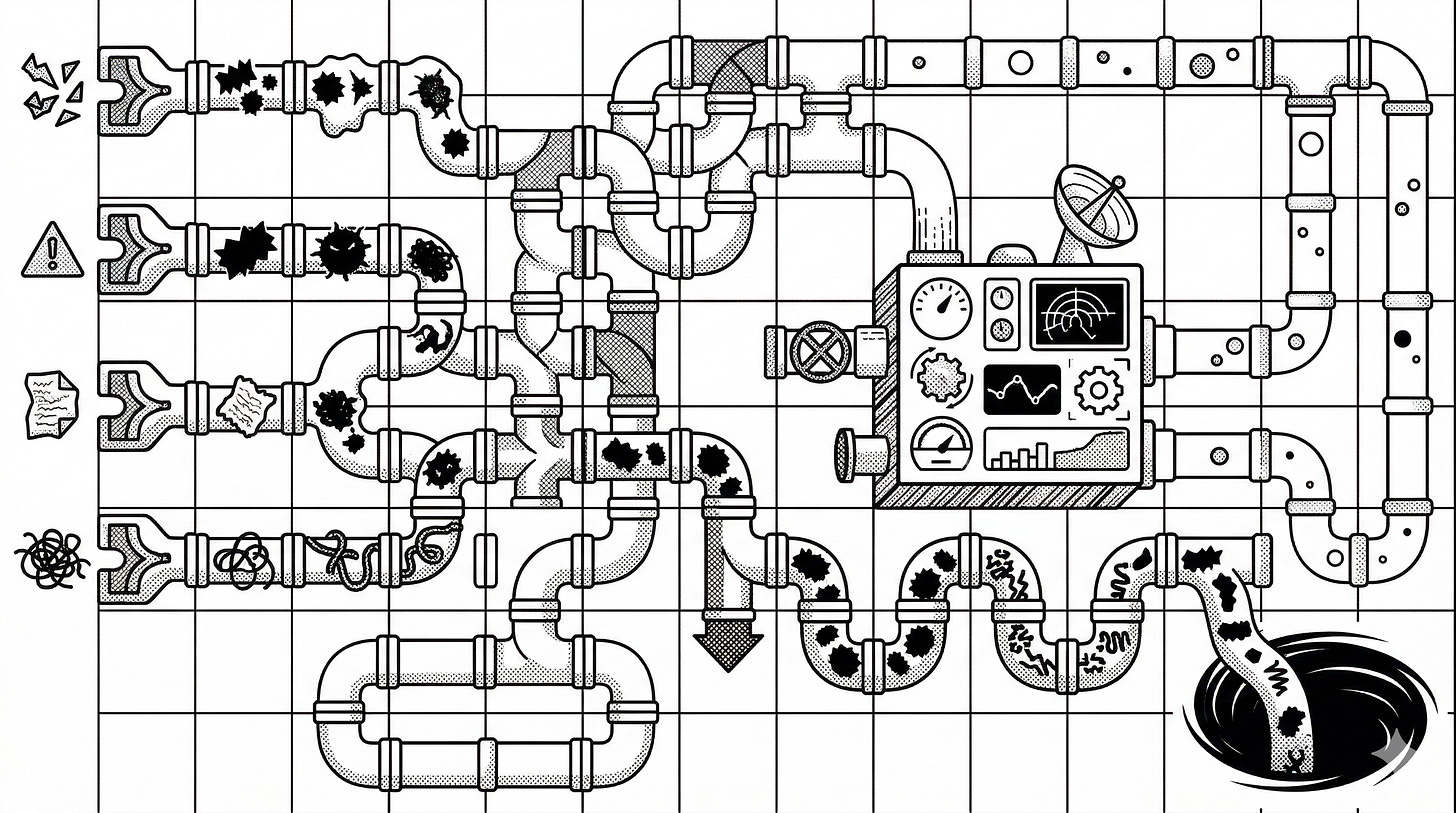

These disasters share a single root cause: structural determinism. The organizational configuration determined what the system could perceive about itself, which determined the nature of each disaster before it happened. The organizational configuration doesn’t cause the cold weather, the foam strike, or the faulty template, but it does determine whether the triggering event is survivable. It constrains what the organization can see, what it can do, and therefore the class of failures that will be catastrophic rather than recoverable.

By “configuration” we mean who talks to whom, what information flows through which channels, what gets amplified and what gets filtered, and who has authority to act on what they know. This communication topology of the organization is not the org chart; it is the living, daily pattern of interaction that people perform when they come to work.

Research supports treating this configuration as the real operating system of any organization. Anthony Giddens’ structuration theory³ showed that social structures are both the medium and the outcome of human practices. People reproduce the structures they inhabit through daily action. Hannan and Freeman⁴ demonstrated that organizations resist change at rates that increase with age and complexity. Soda and Zaheer⁵ confirmed this specifically for communication networks: prior network ties persist long after formal reporting structures have been altered. Chris Argyris⁶ showed the specific mechanism by which this self-reproduction becomes dangerous: organizations develop systematic patterns of information suppression that protect individuals from embarrassment and prevent the organization from learning.

That organizational configuration has three properties that explain every disaster in this dataset.

Dynamic 1: Configurations Filter Their Own Feedback

Every organization generates information about whether it’s working. Customer complaints, system alerts, test failures, near-misses, and engineering concerns are all feedback signals. But the same organizational structure that produces the work also determines which feedback signals reach decision-makers and at what fidelity.

This is the most dangerous property of organizational configurations: the structure that needs the feedback is also the structure that filters it. Each layer of hierarchy, each departmental boundary, each contractor interface applies a filter. What starts as “we should not launch” becomes “there are some concerns” becomes “engineering has reviewed and is comfortable.” The people at the top make decisions in a fog they don’t know they’re in because the configuration has done exactly what configurations do. Alex Pentland’s work on social physics⁷ demonstrated this empirically: information flow patterns in networks are inseparable from the quality of collective decisions, and the network’s structure determines which information flows, to whom, and at what fidelity.

The pattern was present in every case in the dataset.

The Challenger teleconference is the purest example. Morton Thiokol’s engineers recommended against launching. That recommendation was never communicated to the Level I decision-makers. At each relay point, someone applied a filter: the engineers’ certainty was questioned, the data was reframed as “inconclusive,” and the recommendation was reversed when a senior executive told an engineer to “take off your engineering hat and put on your management hat.” The configuration determined what the top of the organization could see.

The Columbia Debris Assessment Team knew the foam strike was dangerous. They requested satellite imagery of the wing. That request was killed by middle management. One manager remarked, “I really don’t think there is anything we can do about it anyway.” The configuration preemptively filtered the inquiry itself. The team wasn’t told they were wrong. They were told the question wasn’t worth asking.

Three Mile Island reveals inter-organizational filtering. A nearly identical valve failure had occurred at the Davis-Besse plant 18 months earlier. The operators there had figured it out. But there was no mechanism for sharing this near-miss across the industry. The TMI operators were flying blind because the feedback from a prior failure existed in a different configuration and never crossed the boundary.

Equifax had an internal audit in 2015 that identified systemic gaps in their patch management process. The process ran on an honor system with no enforcement, relied on an incomplete asset inventory, and carried unresolved vulnerability backlogs. The audit existed. The findings were documented. But the configuration between “audit finding” and “operational change” was so attenuated that the gaps persisted for two more years, until attackers exploited a known Apache Struts vulnerability that had gone unpatched because the notification was sent to an outdated distribution list. An expired SSL certificate then blinded their monitoring tools for 78 days.

HealthCare.gov received 18 written warnings over two years that the project was off course. A McKinsey report in spring 2013 identified more than a dozen critical risks. CMS officials who raised concerns were ignored. The White House pressured CMS to launch on schedule regardless. The configuration filtered the warnings not through incompetence but through political structure. The leaders who needed to hear “we’re not ready” were the same leaders who had publicly committed to the date.

Deepwater Horizon’s negative pressure test returned 1,400 psi when it should have returned zero. The crew invented a physically impossible explanation they called the “bladder effect” because the well was 43 days behind schedule and they were afraid of the consequences of further delay. The data was unambiguous. The organizational configuration reinterpreted the information.

Chernobyl’s control rod design flaw was known to the chief designers and classified as a state secret. The operators were unaware that the emergency shutdown button could act as a detonator under certain conditions. The configuration had determined that the people operating the reactor could not know the thing that would kill them.

Boeing’s internal emails described “Jedi mind tricking” regulators into accepting that MCAS did not need to be in the pilot manual. The FAA had delegated certification authority to Boeing employees under the ODA program. Boeing was certifying its own work. The oversight function shared the configuration of the function it monitored.

Research on psychological safety⁸ identified that when speaking up carries perceived risk, people who detect problems delay reporting them. The gap between “first knew” and “first reported” is the cost of the organizational configuration’s filtering, and it can be measured in hours, days, or fatalities.
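The gap between “first knew” and “first reported” can be made concrete. The following is a minimal sketch, assuming an organization keeps (or can reconstruct) two timestamps per concern; the record layout, the field names `first_knew` and `first_reported`, and the sample data are all hypothetical and are not drawn from any of the cases above.

```python
from datetime import datetime
from statistics import median

# Hypothetical records: when someone first noticed a problem,
# and when it was first formally reported (None = never reported).
concerns = [
    {"id": "C-1", "first_knew": datetime(2024, 3, 1, 9, 0),
     "first_reported": datetime(2024, 3, 1, 15, 0)},
    {"id": "C-2", "first_knew": datetime(2024, 3, 2, 9, 0),
     "first_reported": datetime(2024, 3, 5, 9, 0)},
    {"id": "C-3", "first_knew": datetime(2024, 3, 4, 9, 0),
     "first_reported": None},
]


def reporting_gaps_hours(records):
    """Hours between noticing and reporting, for concerns that were reported."""
    return {
        r["id"]: (r["first_reported"] - r["first_knew"]).total_seconds() / 3600
        for r in records
        if r["first_reported"] is not None
    }


def unreported(records):
    """Concerns that were noticed but never reached a reporting channel."""
    return [r["id"] for r in records if r["first_reported"] is None]


gaps = reporting_gaps_hours(concerns)
median_gap = median(gaps.values())
print(gaps, median_gap, unreported(concerns))
```

In a sketch like this, the unreported list probably matters more than the median: a concern with no report timestamp is a signal the configuration filtered out entirely.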

The pattern is always the same: the information to prevent the disaster existed inside the organization. The Thiokol engineers had it. The Columbia DAT had it. The Equifax auditors had it. The McKinsey consultants had it. Knight Capital’s system generated 97 automated error emails before markets opened. In every case, the organizational configuration filtered the signal before it reached the person who could act on it.

Dynamic 2: Configurations Reproduce Themselves

Organizational configurations don’t persist because someone enforces them. They persist because people perform them. Every day, people come to work and repeat the meetings they attend, the channels they use, the norms they follow, and the shortcuts they take. The organizational configuration is enacted into existence through daily practice, and in being enacted, it becomes the baseline against which all future behavior is measured. This is the core insight of Giddens’ structuration theory³: structures persist not because they are enforced but because they are performed.

This is why organizational drift is invisible from the inside. The configuration performs itself, and in performing itself, it justifies itself.

NASA between 1986 and 2003 is the definitive case. The Columbia Accident Investigation Board found that, seventeen years and an entire generation of personnel later, the organizational causes of the Columbia disaster were “essentially identical” to those of Challenger. The people were different. The technical trigger was different. The organizational configuration was the same, because the organizational configuration had reproduced itself through daily practice across decades.

This is the mechanism sociologist Diane Vaughan identified as the “normalization of deviance.”⁹ It’s not a decision. It’s a drift. NASA managers saw O-ring erosion on early flights, but the secondary O-ring had held and the missions had succeeded. With each successful flight, the deviation was normalized. The O-ring erosion was reclassified from a “design violation” to “in-family behavior.” The organizational configuration reproduced a version of reality where the risk was managed, and that reproduction happened automatically, without anyone choosing it.

Boeing between 2019 and 2024 may be an even more striking case of reproduction than NASA, because the external pressure to change was far greater. After the two MAX crashes killed 346 people, Boeing faced a Congressional investigation, a deferred prosecution agreement with the Department of Justice, more than $20 billion in direct costs, and the most intense public scrutiny an aerospace manufacturer has ever endured. This was an organizational configuration that should have been dismantled, but the production pressure overriding quality control, the inadequate internal oversight, and the erosion of manufacturing discipline persisted. Five years later, a door plug blew off an Alaska Airlines 737-9 in flight because the bolts that secured it were never installed. The NTSB investigation found that Boeing’s production system had allowed an aircraft to leave the factory missing critical fasteners with no record of who removed them or why. The configuration had survived a catastrophe that should have been unsurvivable. It reproduced itself not through inattention but through the same daily schedule pressure, diffused accountability on the factory floor, and quality processes that existed in documentation but not in execution. If NASA is the case for reproduction across decades, Boeing is the case for reproduction under maximum pressure to change.

For months, CrowdStrike’s rapid response content updates had been pushed to 8.5 million Windows endpoints simultaneously, with kernel-level access, no canary deployment, and no staged rollout, all without incident. Each successful push reinforced the practice. Nobody decided this was safe. The absence of failure created a comfortable illusion. When a faulty template with a mismatch between 21 defined input fields and 20 supplied values slipped past a broken validator, the result was catastrophic. The organizational configuration had normalized the deployment pattern that made it possible.
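To make the field-count mismatch concrete, here is a minimal sketch of the kind of pre-release check that compares what a template declares with what an update supplies. It is an illustration only, not CrowdStrike’s validator: the `Template` class, the `validate_inputs` function, and the field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Template:
    """Hypothetical content template that declares its input fields."""
    name: str
    defined_fields: list


def validate_inputs(template, supplied_values):
    """Return a list of problems; an empty list means the counts agree."""
    problems = []
    expected = len(template.defined_fields)
    actual = len(supplied_values)
    if expected != actual:
        problems.append(
            f"{template.name}: expects {expected} input values, got {actual}"
        )
    return problems


# 21 declared fields against 20 supplied values, as in the case described above.
template = Template("example_template", [f"field_{i}" for i in range(21)])
problems = validate_inputs(template, ["value"] * 20)
print(problems)
```

A check this simple is cheap to write. The harder organizational question is whether a non-empty problem list has the authority to block a release.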

Fukushima illustrates reproduction at the deepest level. The Japanese nuclear industry had propagated what the NAIIC report called the “Myth of Absolute Safety.” This myth trapped them: acknowledging risk (by building higher seawalls) would shatter the narrative that justified the industry’s existence. So when 2008 studies predicted tsunamis exceeding 15 meters, TEPCO suppressed them and NISA agreed to ignore them.

Piper Alpha’s permit-to-work system had decayed from rigorous procedure to informal verbal communication. Permits were routinely unsigned. Lord Cullen’s inquiry called the management of safety “superficial.” The organizational configuration had reproduced a version of the safety system that existed only on paper.

Equifax’s patch management failures persisted from the 2015 audit through the 2017 breach because honor-system patching, incomplete asset inventory, and no enforcement were the daily practice. The audit documented what should change. The organizational configuration continued to perform the way it always had.

The Therac-25 removed hardware interlocks because the manufacturer believed software was sufficient. When hospitals reported patient overdoses, AECL engineers responded: “the machine cannot overdose.” The organizational configuration had determined that the organization’s capability to perceive a software defect didn’t exist, because the organizational configuration had defined the software as infallible. Certainty is what a configuration produces when it has successfully filtered all disconfirming evidence for long enough.

The lesson is structural: configurations resist change because they filter the signals that would motivate change. The very information that would cause an organization to alter its practices must first travel through the practices it’s trying to alter. These organizational failure modes can survive personnel changes, reorganizations, and even major disasters.

Dynamic 3: Configurations Determine Capability

The most consequential property of organizational configurations is that they determine what the organization can actually do. What an organization can do is not the same thing as what it intends to do.

Every organization has a mental model of its own capabilities: we can respond to security incidents, we can recover from outages, we can ensure passenger safety, we can oversee our contractors. In every case in this dataset, that self-model was wrong. The organizational configuration had hollowed out a capability that the organization believed it still possessed, and nobody could see the gap because the configuration that created the gap was also the configuration responsible for perceiving it.

HealthCare.gov is the most structurally transparent example. Sixty contracts to thirty-three vendors, with no systems integrator and no integration authority. No single person could see the whole system. No single person could make a binding decision about it. No single person was accountable for whether the pieces fit together. The United States government believed it had the capability to build and launch a health insurance marketplace. The organizational configuration determined that this capability did not exist. The individual contractors were competent, but the organizational configuration had distributed authority so completely that end-to-end ownership was impossible.

Boeing’s 737 MAX shows how a configuration can dismantle oversight capability while appearing to preserve it. The FAA’s Organization Designation Authorization program delegated certification authority to Boeing employees. Boeing was certifying its own work. The FAA believed it had oversight capability. In practice, the organizational configuration had collapsed the distinction between the overseer and the overseen. When Boeing engineers described “Jedi mind tricking” regulators, they were describing a configuration in which the regulator’s capability to independently evaluate the aircraft had been structurally eliminated. MCAS feeding from a single angle-of-attack sensor was not a design mistake made by careless engineers. It was the inevitable output of a configuration where the pressure to avoid simulator training shaped every technical decision, and the entity responsible for catching unsafe tradeoffs was the same entity making them.

Grenfell Tower demonstrates how accountability diffusion produces capability that exists nowhere. The architect, the cladding manufacturer, the insulation supplier, the building control inspector, and the tenant management organization each owned a component. Celotex rigged fire tests by secretly adding non-combustible boards. Arconic withheld data showing their panels performed dangerously. The TMO switched to cheaper, combustible cladding to save £300,000. No one decided to wrap a residential tower in solid fuel. No one had the capability to ensure the building was safe. The organizational configuration had fragmented accountability so thoroughly that safety lived in the gaps between organizations.

Fukushima shows the deepest version: a configuration that makes emergency response capability structurally impossible to build. The Japanese nuclear industry had propagated the “Myth of Absolute Safety.” This myth was a structural trap. Developing serious emergency plans would require acknowledging that serious emergencies were possible, which would shatter the foundation on which the industry’s social license rested. So when 2008 studies predicted tsunamis exceeding 15 meters, TEPCO suppressed them and NISA agreed to ignore them. The organizational configuration did not merely fail to prepare for a tsunami. It determined that preparation was forbidden.

Meta’s 2021 outage reveals how an organizational configuration’s bias towards consolidation and efficiency can limit its own ability to operate. A routine maintenance command disconnected all of Facebook’s data centers. The cascading failure then took down DNS, which took down internal tools, which took down the communication systems, which took down badge access to the data centers. This was not an infrastructure architecture problem that happened to the organization. It was a series of organizational decisions about tooling, about vendor consolidation, and about what “efficiency” meant. Over years these decisions resulted in the company’s ability to respond to a catastrophic failure depending entirely on the systems that had catastrophically failed. The organizational configuration had optimized for efficiency in a way that made resilience impossible.

Knight Capital shows the same dynamic in a financial context. Ninety-seven automated error emails were generated before markets opened. The accumulated decisions of the organizational configuration had produced a system where the capability to stop a rogue trading algorithm simply did not exist. The company lost $460 million in 45 minutes, not because it lacked talented people, but because the configuration those people inhabited had never built the capability to halt what it had built the capability to start.

Piper Alpha illustrates capability decay through configuration drift. The platform had a permit-to-work system designed to prevent exactly the kind of accident that killed 167 people. On paper, the capability existed. In practice, permits were routinely unsigned, verbal approvals had replaced written ones, and the handover between day and night shifts was so informal that the night crew didn’t know a critical pressure safety valve had been removed for maintenance. Lord Cullen’s inquiry called the management of safety “superficial.” The organizational configuration had performed a version of the safety system for so long that nobody noticed the capability had drained out of it.

Through a long run of fortuitously successful deployments, CrowdStrike’s organizational configuration developed a release pattern that never included a staged deployment capability. Rapid response content updates had been pushed simultaneously to 8.5 million endpoints with kernel-level access, no canary deployment, and no staged rollout. The organizational configuration had normalized this practice. When the question “can we limit the blast radius of a bad update?” became urgent, the answer was no, because they had never required it, funded it, or built it.
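For readers unfamiliar with the term, a staged (canary) rollout can be sketched in a few lines. This is a toy model under stated assumptions, not a description of any vendor’s deployment system: the `staged_rollout` function, the stage percentages, and the `push` and `healthy` callbacks are all hypothetical.

```python
def staged_rollout(endpoints, push, healthy, stage_percents=(1, 10, 50, 100)):
    """Push an update in growing waves, halting after the first unhealthy wave.

    Returns (updated_endpoints, halted).
    """
    updated = []
    for percent in stage_percents:
        # Cumulative number of endpoints that should be updated by this stage.
        target = max(1, len(endpoints) * percent // 100)
        wave = endpoints[len(updated):target]
        for endpoint in wave:
            push(endpoint)
            updated.append(endpoint)
        # Check the wave before widening the rollout.
        if not all(healthy(endpoint) for endpoint in wave):
            return updated, True
    return updated, False


# Simulate a bad update that crashes every endpoint it touches.
endpoints = list(range(1000))
crashed = set()


def bad_push(endpoint):
    crashed.add(endpoint)


def is_healthy(endpoint):
    return endpoint not in crashed


updated, halted = staged_rollout(endpoints, bad_push, is_healthy)
print(len(updated), halted)  # 10 True
```

In this toy run the bad update reaches 10 of 1,000 endpoints before the rollout halts. A simultaneous push, by construction, has no such stopping point.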

The pattern across these cases is consistent: the gap between what an organization believes it can do and what it can actually do is a property of its configuration. The FAA believed it could oversee Boeing. CMS believed it could deliver HealthCare.gov. TEPCO believed it could operate safely. Meta believed it could recover from outages. In every case, the organizational configuration determined the organization’s capability. The organization’s own feedback filtering (Dynamic 1) ensured that nobody could see the gap until the gap killed people or destroyed value.

The Selection Pressure

The three dynamics describe how configurations behave. But configurations don’t evolve in a vacuum. They evolve under pressure. In every case in the dataset, the dominant selection pressure was the same: the organization’s environment rewarded speed, cost reduction, and output volume, and those rewards shaped the organizational configuration.

Nobody at Boeing said “conceal the safety risk.” The configuration evolved under competitive pressure from Airbus’s A320neo, schedule pressure to avoid simulator training, and financial pressure from rebate agreements with airlines. The organizational configuration that survived was the one that got results to market fastest, and that configuration happened to filter safety concerns as a byproduct.

Nobody at NASA said “launch in dangerous cold.” The configuration evolved under Congressional pressure for a 24-flights-per-year schedule. The configuration that survived was the one that launched on time. That configuration happened to invert the burden of proof so that engineers had to prove danger rather than managers proving safety.

Schedule pressure is the atmospheric condition in which pathological configurations evolve. It operates through burden-of-proof inversion: under pressure, the default shifts from “prove it’s safe to proceed” to “prove it’s unsafe to proceed.” That shift is lethal because the person arguing for delay must overcome the organizational momentum that the configuration has built, using data that the configuration has filtered.

Cost-cutting operates through the same mechanism with a different currency. Grenfell saved £300,000 by switching to combustible cladding. BP saved $128,000 by canceling the cement bond log. Equifax saved money by not enforcing its patching policy. Boeing saved millions by avoiding simulator training. In every case, the cost savings were real and immediate, and the risk became less obvious because the organizational configuration filtered the feedback that would have made it visible. This is the mechanism by which configurations convert economic pressure into latent hazard.

An organization can never see its own blind spots, and when configurations filter long enough under pressure, they can unwittingly cross an ethical line. Boeing’s “Jedi mind trick” emails were considered strategy instead of negligence. Celotex’s rigged fire tests were fraud masquerading as oversight. A configuration under pressure that has learned to suppress bad news will eventually learn to manufacture good news. The cover-up always costs more than the fix.

The Solution in Plain Sight

The three dynamics create a problem that appears unsolvable. If a configuration filters its own feedback, reproduces itself through daily practice, and determines the organization’s capability to perceive threats, then how can anyone inside the organizational configuration see it clearly enough to change it? The key decision-makers are often the longest tenured and most assimilated to the configuration and therefore the least able to perceive it.

But there is hope. Every organization has a rotating cast of people who, for a short window, don’t share the blind spots of their peers.

A new hire can temporarily see things that tenured employees cannot. They just read the documentation during onboarding, and now they’re watching people ignore it. They were told the organization values safety, quality, or transparency, and now they’re sitting in meetings where schedule pressure overrides all three. They notice the gap between the documented policy and the daily practice because they haven’t yet learned to stop noticing. They are temporarily immune to Dynamic 2. They have not yet been assimilated by the organizational configuration.

It’s important to take a moment here to qualify the value new hires can provide as an early warning system. New hires are not passive observers. They are people under significant social pressure to conform. Asch’s conformity experiments¹⁰ demonstrated that individuals will override their own correct judgment to match a group consensus, even among strangers with no power over them. Van Maanen and Schein’s¹¹ work on organizational socialization showed that new members are actively shaped by the groups they enter, and that the intensity of this shaping is highest in the earliest period. Janis¹² documented the extreme version of this dynamic in his study of groupthink: even senior advisors with decades of experience suppress dissent under group pressure. New hires are more susceptible to these forces because they have the least standing, the most fragile employment situation, and the greatest incentive to stay quiet. And not every new hire has a valid baseline for comparison. Concluding that a practice is dysfunctional rather than an adaptation to local conditions requires relevant prior experience, proximity to the work in question, and enough exposure to distinguish a real pattern from a single data point.

The new hires whose perspective is genuinely diagnostic are not new hires in the generic sense. They are experienced professionals who arrive with well-developed professional standards and have not yet absorbed the local rationalizations. A senior engineer with fifteen years in aerospace can see manufacturing discipline failures that a recent graduate cannot. A hire whose role puts them directly on the factory floor, in the deploy pipeline, or in the incident response rotation can observe practices that someone in an adjacent department may never encounter. And critically, whether any of these people will actually report what they see depends entirely on whether the organization has built a mechanism for capturing that feedback without retaliation. The population most motivated to give socially desirable answers will do exactly that in a culture that punishes dissent.

Most organizations treat this window as an onboarding problem. The questions flow in one direction: Is the new hire settling in? Do they have what they need? Are they going to stay? Managers check in to ensure retention. HR measures time-to-productivity. The entire apparatus is oriented toward assimilating the new hire into the configuration as quickly as possible.

This is a missed opportunity.

The same new hire who hasn’t yet fully adopted the rituals of the configuration is also the person who can tell you where the configuration is lying to itself. They can see the shortcuts that have been normalized, the safety rituals that have become performative, the feedback channels that exist on paper but carry no signal in practice. They can see these things because they are comparing what they were told to what they observe, and they have not yet been trained to resolve the dissonance by updating their expectations downward.

The window closes fast. The same socialization process that makes a new hire productive also makes them blind. I’d speculate that by six months, they have learned which concerns are welcome and which are career-limiting. They have learned the unwritten rules about what gets escalated and what gets absorbed. They have learned to perform the configuration. The perspective they had on day 30 is gone by day 180, and it is likely not coming back.

To help you take advantage of this brief window of insight, I’ve included in the appendix a set of questions that could be asked of new hires between days 30 and 60, mapped to the three dynamics. They could be incorporated into existing onboarding surveys with no additional infrastructure. Rather than asking new hires to evaluate systemic properties they can’t yet see, the items are anchored in what they have witnessed, what they have been told, and what matched and what didn’t. The goal is not a new hire’s theory of the organization. It is a record of experienced dissonance, captured before socialization teaches them to stop keeping it. Asking questions like these early and taking the answers seriously will give you a renewable method for shining a light into the dark corners of your organizational configuration.

The debris of Columbia, the radioactive ruins of Chernobyl, the charred skeleton of Grenfell Tower, and the $460 million that Knight Capital lost in 45 minutes are not monuments to technical failure. They are monuments to the configurations that produced them — configurations that filtered their own feedback, reproduced themselves across decades and personnel changes, and determined that the organizations they governed could not perceive or respond to the threats that destroyed them. The playbook for survival is not better engineering. It is the relentless examination of the configuration itself — because the configuration is the system, and the system is always performing.

References

1. Grove, A. S. (1996). Only the Paranoid Survive: How to Exploit the Crisis Points That Challenge Every Company. Doubleday.

2. Van Atta, D. (2019). Bill Marriott: Success Is Never Final – His Life and the Decisions That Built a Hotel Empire. Shadow Mountain Publishing.

3. Giddens, A. (1984). The Constitution of Society: Outline of the Theory of Structuration. Polity Press.

4. Hannan, M. T. & Freeman, J. (1984). Structural inertia and organizational change. American Sociological Review, 49(2), 149–164. https://doi.org/10.2307/2095567

5. Soda, G. & Zaheer, A. (2012). A network perspective on organizational architecture: Performance effects of the interplay of formal and informal organization. Strategic Management Journal, 33(6), 751–771. https://doi.org/10.1002/smj.1966

6. Argyris, C. (1986). Skilled incompetence. Harvard Business Review, 64(5), 74–79.

7. Pentland, A. (2014). Social Physics: How Social Networks Can Make Us Smarter. Penguin Press.

8. Edmondson, A. C. (1999). Psychological safety and learning behavior in work teams. Administrative Science Quarterly, 44(2), 350–383. https://doi.org/10.2307/2666999

9. Vaughan, D. (1996). The Challenger Launch Decision: Risky Technology, Culture, and Deviance at NASA. University of Chicago Press.

10. Asch, S. E. (1951). Effects of group pressure upon the modification and distortion of judgments. In H. Guetzkow (Ed.), Groups, Leadership and Men (pp. 177–190). Carnegie Press.

11. Van Maanen, J., & Schein, E. H. (1979). Toward a theory of organizational socialization. Research in Organizational Behavior, 1, 209–264.

12. Janis, I. L. (1972). Victims of Groupthink: A Psychological Study of Foreign-Policy Decisions and Fiascoes. Houghton Mifflin.

Appendix: Disaster Reference

Appendix: New Hire Diagnostic

The following items are designed to be administered to new hires between days 30 and 60, while their perspective on the organization’s configuration has not yet been overwritten by socialization. They can be incorporated into existing onboarding surveys with no additional infrastructure. The items map to the three dynamics. They are not about the new hire’s onboarding experience. They are about what the new hire can see that the organization cannot.

Closed items use a 5-point agreement scale: Strongly Disagree, Disagree, Neither Agree nor Disagree, Agree, Strongly Agree. Each dynamic includes one open-ended prompt for qualitative signal that the closed items may not capture.

Feedback Filtering (Dynamic 1)

I have seen examples of leaders inviting input before finalizing decisions.

I know where to go if I notice a problem or have a concern.

When people on my team raise questions or concerns, they are acknowledged respectfully.

In the work I’ve observed so far, decisions seem to be made by the people closest to the work, not just the most senior people in the room.

What we learn from checks or reviews informs how our team works.

(Open-ended) Describe a situation you have observed where a concern was raised. What happened as a result?

Configuration Reproduction (Dynamic 2)

What I was told about how work is done during onboarding matches what I observe in daily practice.

When practices differ from documented guidance, the reason for the difference is explained clearly.

When I ask about a practice that seems unusual to me, the explanation usually refers to a specific reason rather than tradition or habit.

When I ask about the underlying rationale for a process or decision, the explanation is clear and thoughtful.

(Open-ended) Describe something about how work is done here that surprised you or differed from what you expected.

Capability Gaps (Dynamic 3)

For the work I have observed, it is clear who is accountable for the final outcome.

The tools and information I need to do my work safely and effectively are available to me.

I know who to contact when an issue falls outside my team’s responsibility.

When work involves more than one team, roles and handoffs are usually clear.

(Open-ended) Describe anything about how work is organized here that you think could create risk.
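One way the closed items above could be summarized is sketched below, assuming each response is mapped to 1 through 5 and averaged per dynamic. The sample responses, the `dynamic_scores` and `flag_low` helpers, and the review threshold of 3.0 are illustrative assumptions, not a validated scoring method.

```python
from statistics import mean

# The 5-point agreement scale described in this appendix, mapped to 1-5.
SCALE = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neither Agree nor Disagree": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def dynamic_scores(responses):
    """Average the closed-item answers for each dynamic."""
    return {
        dynamic: mean(SCALE[answer] for answer in answers)
        for dynamic, answers in responses.items()
    }


def flag_low(scores, threshold=3.0):
    """Dynamics whose average falls below a (hypothetical) review threshold."""
    return sorted(d for d, s in scores.items() if s < threshold)


# Hypothetical answers from one new hire: 5, 4, and 4 closed items respectively.
responses = {
    "Feedback Filtering": ["Agree", "Agree", "Strongly Agree", "Disagree", "Agree"],
    "Configuration Reproduction": [
        "Disagree", "Disagree", "Neither Agree nor Disagree", "Agree",
    ],
    "Capability Gaps": ["Agree", "Agree", "Agree", "Neither Agree nor Disagree"],
}

scores = dynamic_scores(responses)
print(scores)
print(flag_low(scores))
```

Averages like these are only a pointer to where to look. The open-ended answers are where the specific dissonance is recorded, and they should be read alongside any score.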